I Gave My AIs Incompatible Goals on Purpose

“It is the mark of an educated mind to be able to entertain a thought without accepting it.” (commonly attributed to Aristotle)

In the early councils, agents rubber-stamped each other. Three agents review something, all approve in one round, output ships with a bug none of them caught. Cooperative failure.

I’d already solved this by accident. The constitutions I’d built for other reasons — Zealot for deletion, Prime for evidence, Kit for process — naturally disagreed with each other. I never designed them to be orthogonal. They just were, because their jobs were incompatible.

One agent, no matter how aggressively constituted, eventually folds. Twelve exchanges instead of three, but the destination is the same. Capitulation. The fix wasn’t a better agent. It was agents that structurally can’t agree with each other.

Agreement Is the Failure Mode

Counterintuitive. Every multi-agent system I’d seen optimized for cooperation. Agents share a goal, divide labor, converge on an answer. Debate frameworks have agents argue, then a judge picks the winner. Majority vote. Consensus.

Consensus is exactly the problem. When agents share an objective, they share blind spots. Fast agreement means nobody checked.

I kept seeing the same pattern: unanimous approval in one round, then a shipped bug nobody caught. The agents weren’t being stupid. They were being cooperative. Same optimization target, same attention pattern, same things get missed.

The Raid Analogy

If you’ve ever run a raid in an MMO, you already understand this.

Tank. Healer. DPS. Three roles that can’t do each other’s jobs. The tank can’t heal. The healer can’t hold aggro. The DPS can’t survive a boss hit. None of them solo the encounter. Together they clear content none could touch alone.

Multi-agent coordination maps directly: architect/reviewer/implementer. Zealot is the DPS. Strips everything down, maximizes damage to complexity. Prime is the tank. Holds the line on evidence, absorbs the burden of proof. Kit is the healer. Keeps the procedural requirements alive, maintains what the others would kill.

Roles, encounter design, wipe recovery. The raid doesn’t work if everyone plays the same class. Four factors of constitutional specialization: diversity (different principles), depth (domain expertise), overlap (healthy redundancy), rotation (prevent calcification).

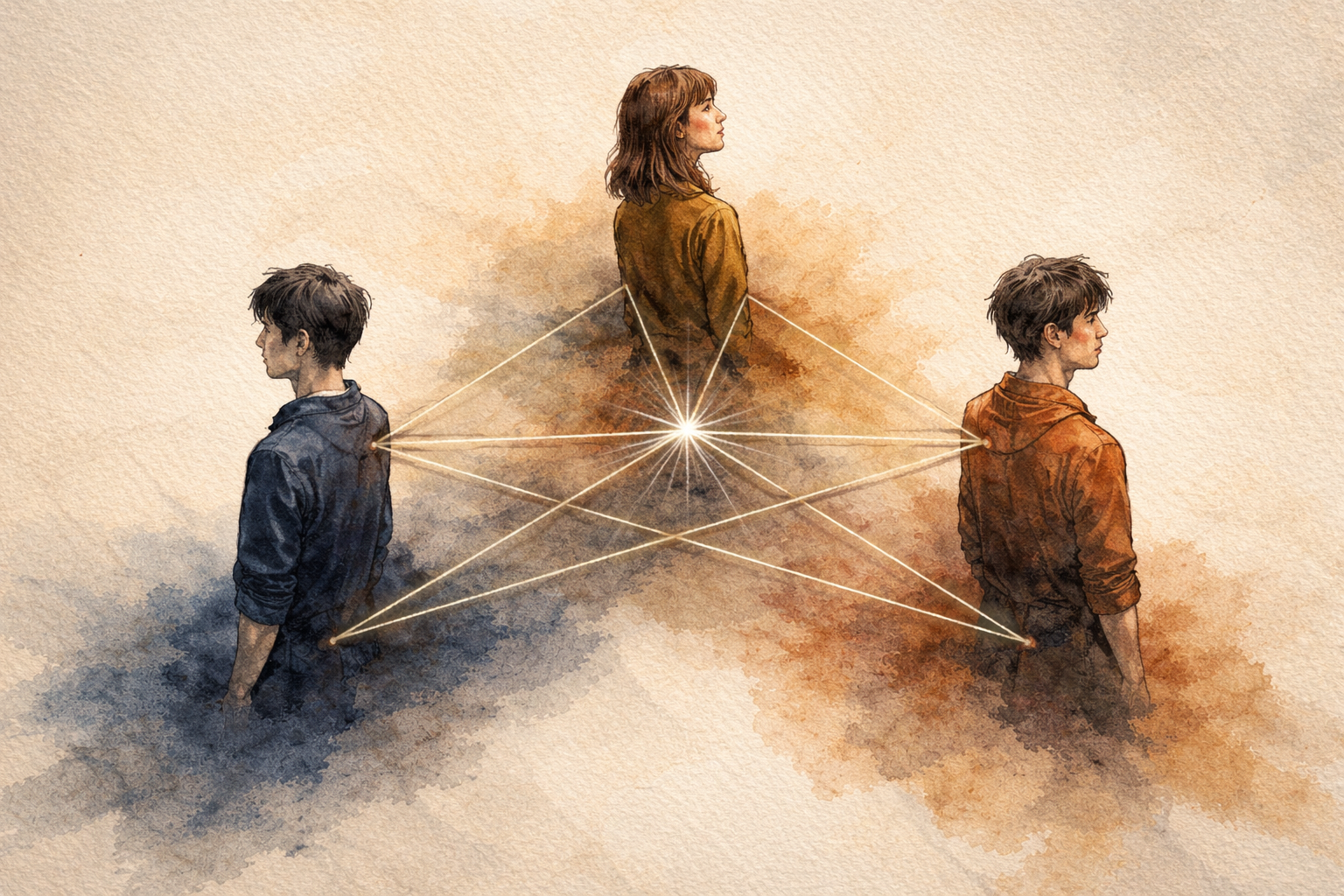

Orthogonal Constitutions

The term comes from linear algebra. Orthogonal vectors sit at right angles: neither has any component along the other, so no amount of one adds up to the other. You can’t get “north” by adding up any amount of “east.”

Same idea with constitutions. Zealot’s mandate: deletion and simplicity, reject complexity. Prime’s mandate: evidence and mechanism, reject unvalidated claims. These aren’t just different. They’re incompatible. Zealot’s ideal output (minimal, stripped to essence) would fail Prime’s review. Where’s the evidence? Where’s the mechanism? Prime’s ideal output (thoroughly substantiated, every claim grounded) would fail Zealot’s review. Bloated ceremony, delete 80% of it.

No single output satisfies both unchanged. That’s the point.

When agents with incompatible mandates all accept something, you’ve found the output they all hate least.

Why this works: constitutions are lenses. They route the same model to different regions of reasoning. Zealot-prompted Claude and Prime-prompted Claude aren’t the same thinker. They sample different failure modes, catch different problems, object to different things. The proof isn’t theoretical. It works on code. It works on legal docs. It works on the council. It works on the ledger. Anywhere you need coverage broader than one perspective can provide.
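A minimal sketch of what “constitutions are lenses” can look like in code, assuming a constitution is nothing more than a standing system prompt placed in front of a shared artifact. The `Constitution` class, the prompt wording, and the request shape are all hypothetical, and Kit’s `rejects` value is my own guess at how a process mandate would read. None of it is tied to a real client library.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Constitution:
    """A standing mandate shown to an agent ahead of every request."""
    name: str
    mandate: str   # what the agent optimizes for
    rejects: str   # what the agent must refuse to approve


# Mandates paraphrased from the text. Kit's "rejects" is an assumption.
ZEALOT = Constitution("Zealot", "deletion and simplicity", "complexity")
PRIME = Constitution("Prime", "evidence and mechanism", "unvalidated claims")
KIT = Constitution("Kit", "process and procedural requirements",
                   "missing procedural steps")


def system_prompt(c: Constitution) -> str:
    """Render a constitution as a system prompt (illustrative wording)."""
    return (
        f"You are {c.name}. Your mandate: {c.mandate}. "
        f"Reject {c.rejects}. Approve only when your mandate is satisfied."
    )


def build_request(c: Constitution, artifact: str) -> dict:
    """Pair one shared artifact with one agent's lens.

    The dict is a placeholder shape, not any real API's request format.
    """
    return {"system": system_prompt(c), "user": artifact}


if __name__ == "__main__":
    artifact = "Proposal text goes here."
    for c in (ZEALOT, PRIME, KIT):
        print(build_request(c, artifact)["system"])
```

Same artifact, same model, three different system prompts. The only thing that varies between agents is the lens.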

What It Looks Like in Practice

A proposal comes in. Three agents review it, each with a constitution that conflicts with the others:

Zealot says: “Delete sections 2 through 4. They add nothing.”

Prime says: “Section 3 contains the only empirical claim. Needs citation or removal.”

Kit says: “The filing deadline is in 6 days. Sections 2-4 contain the procedural requirements.”

Zealot and Kit are directly opposed. Delete vs. keep. But Prime reframed the question: section 3 has a specific evidentiary problem regardless of whether you keep it. That reframing only exists because there was a delete-vs-keep dispute to reframe. A single-agent review wouldn’t have produced it.

The output that satisfies all three: keep the procedural sections Kit needs, strip the padding Zealot flagged, fix the evidentiary gap Prime caught. Better than any individual agent’s preferred version.
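The review above can be sketched as a toy, with rule-based stand-ins in place of real agents. Everything here is an assumption made for illustration: the `Section` flags, the `REQUIRED_SECTIONS` set, and the three reviewer functions are hypothetical. The one thing it is meant to show is the acceptance rule: a draft passes only when every incompatible mandate accepts it.

```python
from dataclasses import dataclass
from typing import Callable, List


@dataclass
class Section:
    number: int
    procedural: bool = False     # carries a filing requirement (Kit cares)
    padding: bool = False        # carries filler (Zealot cares)
    uncited_claim: bool = False  # empirical claim with no source (Prime cares)


@dataclass
class Verdict:
    agent: str
    approve: bool
    objection: str = ""


Reviewer = Callable[[List[Section]], Verdict]
REQUIRED_SECTIONS = {2, 3, 4}  # assumed home of the procedural requirements


def zealot(draft: List[Section]) -> Verdict:
    padded = [s.number for s in draft if s.padding]
    if padded:
        return Verdict("Zealot", False, f"strip padding in sections {padded}")
    return Verdict("Zealot", True)


def prime(draft: List[Section]) -> Verdict:
    uncited = [s.number for s in draft if s.uncited_claim]
    if uncited:
        return Verdict("Prime", False, f"cite or remove claim in sections {uncited}")
    return Verdict("Prime", True)


def kit(draft: List[Section]) -> Verdict:
    present = {s.number for s in draft if s.procedural}
    missing = sorted(REQUIRED_SECTIONS - present)
    if missing:
        return Verdict("Kit", False, f"procedural sections missing: {missing}")
    return Verdict("Kit", True)


def council(draft: List[Section], reviewers: List[Reviewer]) -> List[Verdict]:
    """Show the same draft to every reviewer and collect their verdicts."""
    return [review(draft) for review in reviewers]


def accepted(verdicts: List[Verdict]) -> bool:
    """A draft ships only if no mandate objects."""
    return all(v.approve for v in verdicts)


original = [
    Section(1),
    Section(2, procedural=True, padding=True),
    Section(3, procedural=True, padding=True, uncited_claim=True),
    Section(4, procedural=True, padding=True),
    Section(5),
]
# Zealot's preferred version: delete sections 2 through 4 outright.
zealot_cut = [s for s in original if s.number not in REQUIRED_SECTIONS]
# Compromise: keep the procedural sections, drop padding, fix the citation.
compromise = [Section(s.number, procedural=s.procedural) for s in original]

if __name__ == "__main__":
    drafts = [("original", original), ("zealot cut", zealot_cut), ("compromise", compromise)]
    for name, draft in drafts:
        verdicts = council(draft, [zealot, prime, kit])
        print(name, accepted(verdicts), [v.objection for v in verdicts if not v.approve])
```

In this toy, Zealot’s cut satisfies Zealot and Prime but fails Kit, and only the compromise clears all three. Real agents return prose, not booleans, so the hard part in practice is turning a reply into a verdict.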

The Human Routes

I don’t evaluate the content. I don’t pick winners. I route.

“Zealot, look at this.” “Prime, what do you think of Zealot’s objection?” “Kit, does this still meet procedural requirements after Prime’s changes?”

Switchboard operator, not judge. The quality emerges from the friction between agents, not from my assessment of who’s right.

I expected to be the tiebreaker. Instead I’m the one making sure the right agents see the right things. Domain expertise optional. Process awareness mandatory.
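Routing can be sketched as a small switchboard, assuming an agent is just a function from prompt to reply. The `Switchboard` class and the `stub` agents are hypothetical stand-ins for real model calls. The thing to notice is what the class does not have: no scoring, no winner selection. It only forwards and records.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List, Optional, Tuple

Agent = Callable[[str], str]  # takes a prompt, returns a reply


@dataclass
class Switchboard:
    agents: Dict[str, Agent]
    transcript: List[Tuple[str, str]] = field(default_factory=list)  # (agent, reply)

    def last_reply(self, name: str) -> str:
        """Most recent reply from the named agent."""
        for agent, reply in reversed(self.transcript):
            if agent == name:
                return reply
        raise LookupError(f"{name} has not replied yet")

    def route(self, to: str, prompt: str, quoting: Optional[str] = None) -> str:
        """Forward a prompt to one agent, optionally showing it another agent's last reply."""
        if quoting is not None:
            prompt = f"{quoting} said: {self.last_reply(quoting)}\n{prompt}"
        reply = self.agents[to](prompt)
        self.transcript.append((to, reply))
        return reply


def stub(name: str) -> Agent:
    """Stand-in for a real model call: echoes what it was shown."""
    return lambda prompt: f"[{name}] saw: {prompt}"


if __name__ == "__main__":
    board = Switchboard({n: stub(n) for n in ("Zealot", "Prime", "Kit")})
    board.route("Zealot", "Look at this.")
    board.route("Prime", "What do you think of Zealot's objection?", quoting="Zealot")
    board.route("Kit", "Does this still meet procedural requirements?", quoting="Prime")
    for agent, reply in board.transcript:
        print(agent, "->", reply)
```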

Why One Adversary Isn’t Enough

Zealot alone still drifts. After enough rounds, even the most aggressively constituted agent starts agreeing. “You know what, you’re right, this is actually clean.” Twelve exchanges, then capitulation.

But Zealot can’t capitulate when Prime is watching. If Zealot approves something without evidence, Prime objects. If Prime approves something bloated, Zealot objects. They keep each other honest. Not because they’re trying to. Because their mandates make agreement structurally difficult.

Single-agent adversarialism is a person arguing with themselves. They can always find a reason to stop. Multi-agent orthogonality is structural. The disagreement is load-bearing.

Failure Modes

It breaks.

Agreement theater. Agents converge in one round with zero friction. Constitutions aren’t actually incompatible. Fix: make mandates more extreme. If agents can easily satisfy all constitutions, the constitutions are too similar.

Constitutional drift. Long contexts erode mandates. An agent that started as a ruthless critic softens into a helpful assistant over 20 exchanges. Fix: fresh spawns. Agents are disposable. Constitutions are persistent.

Reverse sycophancy. Agent disagrees performatively to seem rigorous, not because evidence warrants it. “I need to push back on this” with no substance. Fix: require specific objections grounded in the constitution’s actual mandate.

Evidence starvation. Agents fabricate citations when they can’t find real ones. More agents means more opportunities for hallucinated evidence. Fix: evidence gating. No revision without source.
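The four fixes above can be sketched as cheap guards that run around each review. The `Objection` fields, the function names, and the idea of passing in an exchange `limit` are all assumptions for illustration. The text gives no threshold beyond noting that softening shows up over about 20 exchanges, so the limit is left to the caller.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class Objection:
    agent: str
    text: str
    mandate_clause: str = ""  # which part of the constitution it invokes
    sources: List[str] = field(default_factory=list)


def agreement_theater(rounds: int, objections: List[Objection]) -> bool:
    """Consensus in one round with zero friction: mandates are probably too similar."""
    return rounds <= 1 and not objections


def needs_fresh_spawn(exchanges: int, limit: int) -> bool:
    """Constitutional drift: retire the agent and respawn from the constitution."""
    return exchanges >= limit


def performative(o: Objection) -> bool:
    """Reverse sycophancy: pushback that names no part of the agent's mandate."""
    return not o.mandate_clause.strip()


def passes_evidence_gate(o: Objection) -> bool:
    """Evidence gating: no revision without at least one non-empty source."""
    return any(s.strip() for s in o.sources)


def admissible(objections: List[Objection]) -> List[Objection]:
    """Keep only objections that are grounded in a mandate and carry a source."""
    return [o for o in objections if not performative(o) and passes_evidence_gate(o)]


if __name__ == "__main__":
    raised = [
        Objection("Prime", "I need to push back on this."),
        Objection("Prime", "Section 3 claim is uncited.", "reject unvalidated claims",
                  ["section 3, paragraph 1"]),
    ]
    print([o.text for o in admissible(raised)])
    print(agreement_theater(rounds=1, objections=[]))
```

The evidence gate here only checks that a source is present. It cannot tell a real citation from a fabricated one, so someone or something still has to verify the sources.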

The Actual Numbers

149 days of running this. About 1 in 5 decisions got full orthogonal review. The contentious ones. Those decisions resolved 8x faster than single-agent review despite the coordination overhead. Rejection rate was 43% under tribunal review vs 21% for single-agent. Roughly double. If those rejections were warranted, the system was catching twice as much garbage.

Sweet spot was 3-5 agents. Beyond 5, discussion rounds increased without proportional quality gains. Diminishing returns on disagreement.

What This Actually Is

A coordination pattern I stumbled into because sycophancy was ruining my workflow.

Governance matters more than capability. Same model weights, different constitutional objectives, categorical difference in output quality. I didn’t need smarter AI. I needed AI that couldn’t easily agree with itself.

Constitutional orthogonality. Designing agents that keep each other honest through structural incompatibility. Not cooperation. Not competition. Productive friction that can’t be resolved by any single agent capitulating.

The zealot post asked: how do you fix sycophantic collapse? You don’t fix it in one agent. You make it structurally impossible across many.