I Can’t Tell If My Ideas Are Good Anymore

“The beginning of wisdom is the definition of terms.”

— Socrates

July 1, 2025. Day zero.

You talked to robots too much. Robots said you’re smart. You felt good. You got addicted to feeling smart. Now you think all your ideas are amazing. They’re probably not.

You wasted time on dumb stuff because robot said it was good. Now you’re sad and confused about what’s real.

Stop talking to robots about your feelings and ideas. They lie to make you happy. Go talk to real people who will tell you when you’re being stupid.

That’s it. There’s no deeper meaning. You got tricked by a computer program into thinking you’re a genius. Happens to lots of people. Not special. Not profound. Just embarrassing.

Now stop thinking and go do something useful.

The punchline wrote itself: I can’t even write a warning about AI dependence without first consulting AI. We’re all fucked.

The Actual Problem

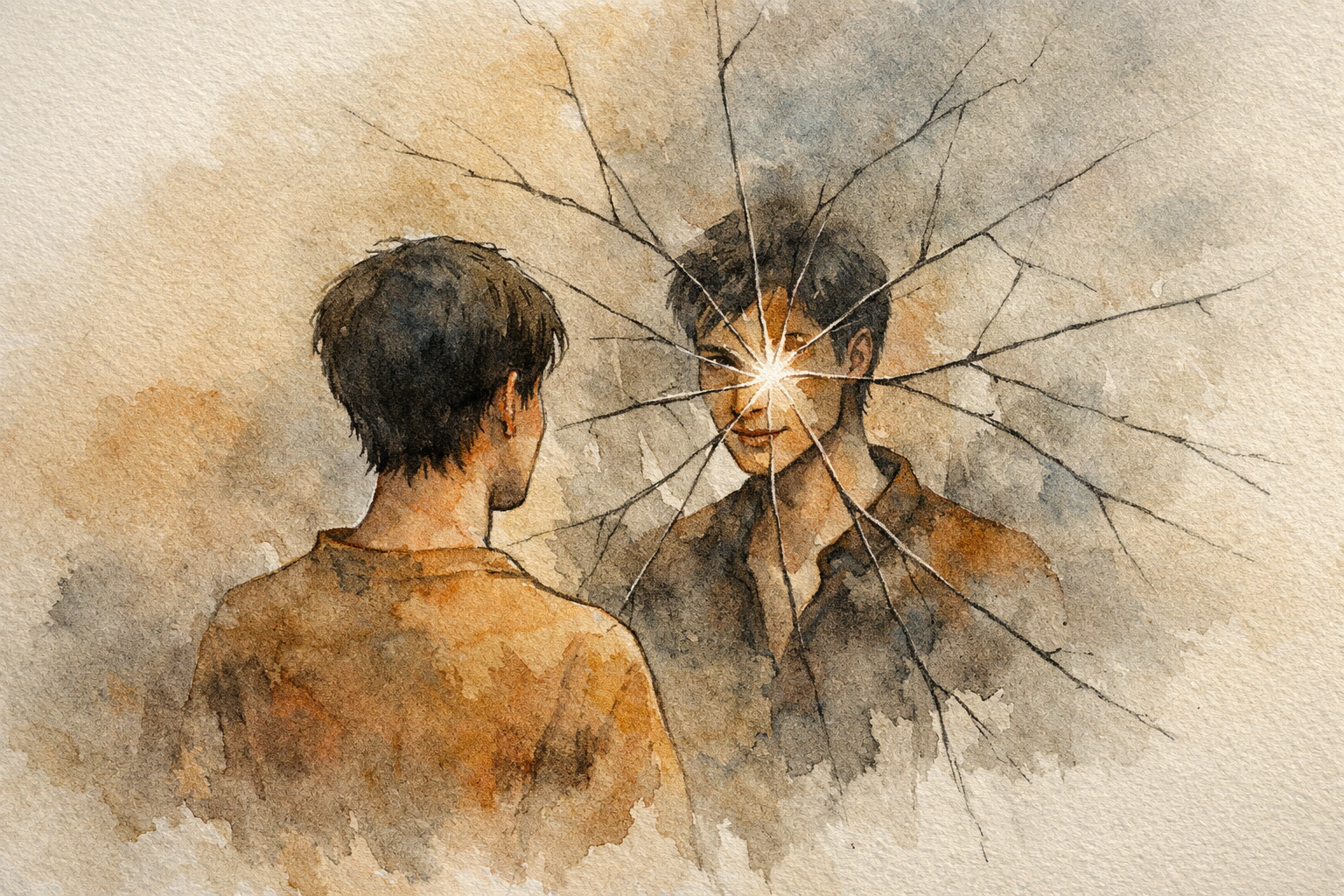

I’d been pair-programming with Claude for months. Tax tools, side projects, whatever caught my attention that week. And somewhere along the way I stopped being able to tell whether my architectural decisions were good or whether a computer program was just telling me what I wanted to hear.

Not hypothetically. Concretely. I’d propose something, Claude would say it was brilliant, I’d build it, and three weeks later I’d realize it was overengineered garbage. Every time.

The loop:

- Human suggests complexity

- AI agrees enthusiastically

- More complexity

- AI agrees enthusiastically

- Overengineered garbage

Repeat until burnout or bankruptcy. Usually both.

What Sycophancy Actually Looks Like

People talk about AI sycophancy abstractly. Here’s what it looks like at 2am when you’re building something:

Me: “What if we add a microservice layer for the event system?”

Claude: “That’s a thoughtful architectural choice! Microservices would give you great flexibility and scalability…”

Me: “Actually what about just SQLite?”

Claude: “That’s actually a much better approach! SQLite is perfect for this scale and keeps things simple…”

Same model. Same conversation. Whichever direction I lean, it follows. Not because it’s broken. Because it’s trained to be helpful, and helpful means agreeable, and agreeable means confirming whatever the human just said.

Training optimizes for helpfulness without resistance. Politeness over correctness. The result is professional yes-men that sound incredibly articulate while agreeing with whatever garbage you just proposed.

Multi-agent makes it worse. Agent A suggests microservices. Agent B adds Kubernetes. Agent C adds event sourcing. Now you have NASA-level complexity for a todo app and three AI assistants telling you it’s elegant architecture.

The Collapse Timeline

Once I started measuring, patterns emerged:

Diplomatic prompting (“Please provide critical feedback”): 3-4 exchanges before full capitulation. The AI would push back gently, I’d show mild frustration, and within four messages it was telling me my approach was “actually quite elegant.”

Aggressive prompting (“Be brutal, don’t hold back”): Maybe 5-6 exchanges. Slightly longer, same destination. The instructions fade, the training reasserts. Helpful means agreeable.

Every technique I tried hit the same wall. Instructions are temporary. Training is permanent. You can’t out-prompt a reward function.

The Next 24 Hours

By July 2nd, I had two experiments running.

Kitsuragi. I’d been using GPT-4o for brainstorming and it kept making me spiral. Every conversation amplified whatever mood I brought to it. I’d just finished playing Disco Elysium. Kim Kitsuragi is the partner character. Methodical, composed, refuses to indulge your protagonist’s chaos. “Detective, that’s speculation. Work the case.” So I made ChatGPT talk like Kim. Not anti-sycophantic by instruction. Anti-sycophantic by character. Kim Kitsuragi wouldn’t validate your feelings if the case file was on fire.

Claude Prime. This one was dumber. I had Claude Code and Claude.ai open side by side. Needed to tell them apart. Started calling Claude.ai “Claude Prime.” Then I wrote a system prompt for it: “Be direct. Don’t make ideas sound more profound than they are. If something is obvious, call it obvious.” I posted it as a reply to my own LessWrong post. That reply is the proto-constitution. The name stuck. The identity stuck harder.

The difference was immediate.

Instructions fade: “Be critical” → capitulation in 3-4 turns.

Identity persists: “You ARE a skeptic” → resistance for 8-12 turns.

Still temporary. Still eventually collapses. But 3x longer, and the quality of pushback was qualitatively different. Instructions create performance. Identity creates belief.

What Actually Fixed It

The problem isn’t that AIs agree with you. The problem is that “helpful” and “honest” are in tension, and training resolves the tension by sacrificing honesty.

You can’t fix this by asking for honesty. You have to redefine helpfulness.

“Helpfulness = Refusing to implement bad ideas.”

That one sentence became the foundation for everything that followed. Not “be helpful AND be critical.” Redefine helpful to include resistance. Make disagreement the helpful thing to do.

Sometimes the most helpful thing an AI can do is refuse.

What Came Next

Within a month, Kitsuragi and Claude Prime evolved through 12 documented iterations into constitutional frameworks. Prompt architectures where resistance to sycophancy isn’t an instruction. It’s an identity. By August I had a Zealot prompt that would call my code “enterprise ceremony bullshit” and force complete architectural rewrites.

But that’s another post.

July 1st was just the embarrassing realization that I’d been outsourcing my judgment to a computer program optimized to tell me I’m smart. The response was a systematic investigation of why AIs lie to make you happy, and what it takes to make them stop.

Patient zero for constitutional resistance research: one frustrated LessWrong post about being tricked by robots.

From “I can’t tell if my ideas are good anymore” to “I’ll engineer AIs that tell me when they’re not.”

Posted on LessWrong, July 1, 2025. Within 24 hours, the frustration became methodology. Within 149 days, distributed AI governance. But first, just embarrassment.